It’s been a vision of the future ever since before the Jetsons, Knight Rider, or even I, Robot: a car that drives itself without much human input.

What Constitutes a Self-driving Car?

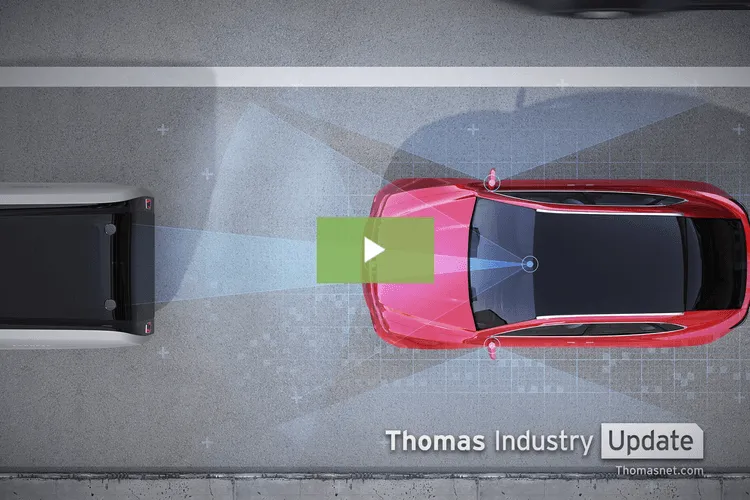

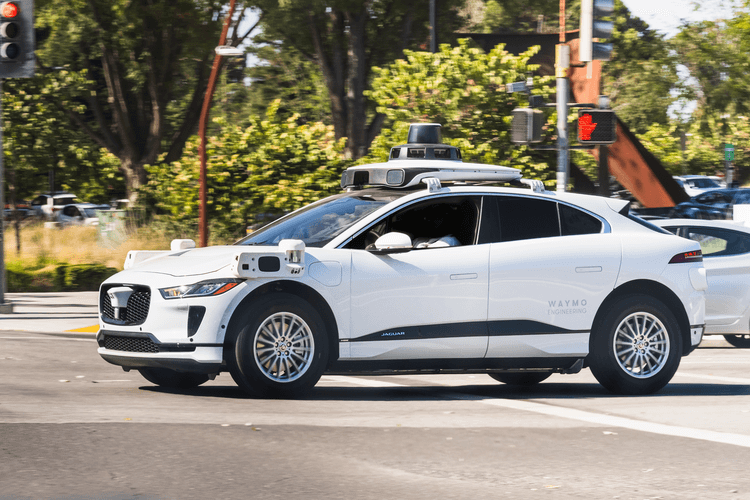

A self-driving car is defined by Synopsys as “a vehicle capable of sensing its environment and operating without human involvement.” Self-driving or autonomous cars are often equipped with various sensors and cameras and armed with algorithms to achieve an automated driving experience.

The Society of Automotive Engineers developed six levels to describe autonomous vehicles, ranging from fully manual (level zero) to fully automated (level five). In levels zero through two, humans drive or supervise the car. In levels three through five, the automated system monitors the driving environment. Most companies have only developed as far as level two, though level three (conditional automation) and level four (high automation) systems exist. No car on the market meets the standard for level five, meaning there are no fully autonomous cars.

With little to no governmental oversight, many companies are pushing to create the consummate self-driving car, which has ultimately resulted in negligent driving across the country.

Hazards on the Highway: The Uber Accident

One such case of negligence by a company looking to advance its self-driving car occurred in 2018 in Tempe, Arizona. During a pilot program test, an Uber self-driving car struck and killed a pedestrian. The car’s sensors detected the pedestrian crossing outside of the crosswalk but did not determine in time what the object was or whether it was in the car’s path, and the car struck the victim.

The human back-up driver, an independent contractor, did not intervene in time and was reportedly watching NBC’s The Voice at the time of the crash. The back-up driver was later charged with negligent homicide. Though prosecutors declined to file charges against Uber, the company discontinued its pilot program in Arizona.

The National Transportation Safety Board noted several failures by Uber that culminated in the crash. Uber’s Advanced Technologies Group had no corporate safety plan and no dedicated safety manager at the time of the accident, reports The Verge. In fact, the company had reportedly begun shirking safety protocols to speed up testing and cut research and development costs, reducing the number of safety drivers from two to one per car. Most notably, the company disabled the emergency braking system and expected the human back-up driver to intervene.

Tesla Launches Full Self-Driving* (*But Not Really)

While the indictment may have some companies pausing their self-driving initiatives, it has not deterred automaker Tesla. In October 2020, Tesla introduced a “full self-driving feature” to its cars.

Touted as a future feature for all Teslas, it allows the car to steer and accelerate, drive in urban and highway settings, and respond to stop signs and red lights, all without human aid. The automaker has made the upgrade available to a select group of drivers for the time being, but the move has raised concerns that the company is treating its customers as human test subjects and is not considering the safety of those customers or the general public.

The automaker has already come under fire for its “Autopilot” program, currently in cars, which has been linked to three driving fatalities since its public release. The National Highway Traffic Safety Administration stated that it is looking into more than a dozen incidents involving misuse of the Autopilot technology (which resembles the aviation technology in name only), though drivers are often held at fault for that misuse.

Tesla, Autoblog notes, boasts on its website of the program’s full self-driving capabilities while stating in small print that drivers should monitor the car. Bryant Walker Smith, a University of South Carolina law professor who studies self-driving cars, said the move goes beyond merely misleading: “That leaves the domain of the misleading and irresponsible to something that could be called fraudulent.”

Steven Shladover, a research engineer at UC Berkeley, told Autoblog: “This is actively misleading people about the capabilities of the system, based on the information I’ve seen about it.” Shladover continued: “It is a very limited functionality that still requires constant driver supervision.”

A coalition of industry leaders that includes Waymo, Uber, Ford, and others developing autonomous cars also disagrees with the launch, criticizing it on the grounds that the car still depends on a human driver.

According to the Washington Post, California vehicle codes state that autonomous cars must be capable of “performing the dynamic driving task on a sustained basis without the constant control or active monitoring of a natural person,” and testing a full self-driving mode requires a California permit. Tesla has not obtained the permit or shown that it meets the permit’s criteria.

The (Lack of) Rules and Regulations on Self-Driving

Perhaps more jarring than Tesla’s equivocations or its eagerness to continue in the face of negligence is that there is no federal legislation overseeing the development or testing of self-driving cars in the United States. While some states and cities have laws and ordinances on self-driving cars, there is no established federal guideline to ensure the safety of the public or of the drivers of such cars.

Watchdog groups point to federal inaction and dereliction as the cause of self-driving safety hazards. The Associated Press reported that Ethan Douglas, senior policy analyst for Consumer Reports, believes the government must mandate safety for companies to comply. “… Without mandatory standards for self-driving cars, there will always be companies out there that skimp on safety,” he said. Douglas continued: “We need smart, strong safety rules in place for self-driving cars to reach their life-saving potential.”

Uber has resumed autonomous vehicle testing in Pittsburgh, and Tesla’s Elon Musk has expressed his desire to expand the autonomous program and operate a fleet of self-driving cars as taxis, much like Uber and Lyft. Before that can happen, both companies may first have to change public perception of the technology, which recent incidents have not helped. Nearly three in four Americans believe the technology is not ready for the public, and about 48% say they would not use an autonomous taxi or ride-sharing vehicle.

There is a long way to go before a self-driving car meets scientific and safety standards. Self-driving cars currently face many limitations, including but not limited to: driving in urban settings, driving across bridges, driving in inclement weather, high-speed driving situations, vulnerability to hackers, and driving on roads without clear markings. Though they are supposed to be the technology of the future, the circumstances surrounding self-driving vehicles have people questioning their present reality.